- What is Configuration Management?

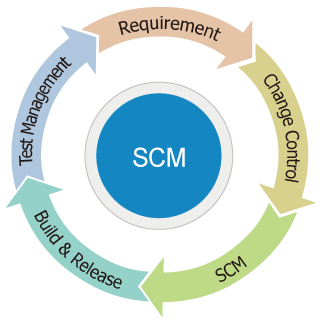

- Software Configuration Management (SCM) is a system for managing the evolution of software products, both during the initial stages of development and during all stages of maintenance. A software product encompasses the complete set of computer programs, procedures, and associated documentation and data designated for delivery to a user. All supporting software used in the development, even though not part of the software product, should also be controlled by SCM.

- The general goal of test management is to allow teams to plan, develop, execute, and assess all testing activities within the overall software development effort. This includes coordinating efforts of all those involved in the testing effort, tracking dependencies and relationships among test assets and, most importantly, defining, measuring, and tracking quality goals.

- Test planning is the overall set of tasks that address the questions of why, what, where, and when to test. The reason why a given test is created is called a test motivator. What should be tested is broken down into many test cases for a project. Where to test is answered by determining and documenting the needed software and hardware configurations. When to test is resolved by tracking which iterations (or cycles, or time periods) the testing is assigned to.

- Test authoring is a process of capturing the specific steps required to complete a given test. This addresses the question of how something will be tested. This is where somewhat abstract test cases are developed into more detailed test steps, which in turn will become test scripts (either manual or automated).

- Test execution entails running the tests by assembling sequences of test scripts into a suite of tests. This is a continuation of answering the question of how something will be tested.

- Test reporting is how the various results of the testing effort are analyzed and communicated. This is used to determine the current status of project testing, as well as the overall level of quality of the application or system.
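The four activities above (planning, authoring, execution, reporting) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the names `ScriptedTest`, `execute_suite`, and `summarize`, the requirement IDs, and the configuration strings are all hypothetical and do not come from any particular test management tool.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ScriptedTest:
    """An authored test: planning data plus the executable steps (the 'how')."""
    name: str
    motivator: str        # why the test exists (planning)
    configuration: str    # where it runs (planning)
    iteration: int        # when it runs (planning)
    steps: List[Callable[[], bool]] = field(default_factory=list)

    def run(self) -> bool:
        # A scripted test passes only if every step passes.
        return all(step() for step in self.steps)


def execute_suite(suite: List[ScriptedTest]) -> Dict[str, bool]:
    """Execution: run each script in the suite and record pass/fail by name."""
    return {test.name: test.run() for test in suite}


def summarize(results: Dict[str, bool]) -> Dict[str, float]:
    """Reporting: condense raw results into a status summary."""
    total = len(results)
    passed = sum(results.values())
    return {
        "total": total,
        "passed": passed,
        "failed": total - passed,
        "pass_rate": passed / total if total else 0.0,
    }


# Hypothetical suite: the lambdas stand in for real test steps.
suite = [
    ScriptedTest("login_ok", "REQ-1 valid login", "linux/chrome", 1,
                 [lambda: 1 + 1 == 2]),
    ScriptedTest("login_bad", "REQ-2 invalid login", "linux/chrome", 1,
                 [lambda: True, lambda: False]),
]
results = execute_suite(suite)
summary = summarize(results)
print(results)
print(summary)
```

Keeping the planning fields (`motivator`, `configuration`, `iteration`) on the same record as the steps is one possible design; a real tool would more likely store them separately and link them.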

- What is Requirement-based Test Management?

- Many studies show that the majority of software projects fail to achieve their schedule and budget goals. Poor software quality is one of the main reasons behind many of these failures, which often result in rework of application requirements, design, and code. Experience and numerous studies show that poor software quality stems from defects in requirements specifications and from problematic system test coverage. That is, low-quality input causes low-quality output, no matter how experienced the project team is, which methodology is used, or which budget and timeline constraints are established.

Source: Requirements-based Testing Process in Practice, 2010

[Figure 1. Distribution of defects in software projects]

- According to James Martin, the root causes of 56 percent of all defects identified in software projects are introduced in the requirements phase (Fig. 1). About 50 percent of requirements defects are the result of poorly written, unclear, ambiguous, or incorrect requirements.

- The requirements-based testing (RBT) process addresses two major issues: first, validating that the requirements are correct, complete, unambiguous, and logically consistent; and second, designing a necessary and sufficient (from a black box perspective) set of test cases from those requirements to ensure that the design and code fully meet those requirements.

- The overall RBT strategy is to integrate testing throughout the development life cycle and to focus on the quality of the requirements specification. This leads to early defect detection, which has been shown to be much less expensive than finding defects during integration testing or later.

- To put the RBT process into perspective, testing can be divided into the following eight activities:

- 1. Define Test Completion Criteria

- The test effort has specific quantitative and qualitative goals. Testing is complete only when those goals have been reached (e.g., testing is complete when all functional variations, fully sensitized for the detection of defects, and 100% of all statements and branch vectors have executed successfully in a single run or set of runs with no code changes in between).

- 2. Design Test Cases

- Logical test cases are defined by five characteristics: the initial state of the system prior to executing the test, the data in the database, the inputs, the expected outputs, and the final system state.

- 3. Build Test Cases

- Two things are needed to build test cases from logical test cases: creating the necessary data, and building the components that support testing (e.g., building the navigation to reach the portion of the program being tested).

- 4. Execute Tests

- Execute the test-case steps against the system being tested and document the results.

- 5. Verify Test Results

- Verify that the test results are as expected.

- 6. Verify Test Coverage

- Track the amount of functional coverage and code coverage achieved by the successful execution of the set of tests.

- 7. Manage and Track Defects

- Any defects detected during the testing process are tracked to resolution. Statistics are maintained concerning the overall defect trends and status.

- 8. Manage the Test Library

- The test manager maintains the relationships between the test cases and the programs being tested. The test manager keeps track of what tests have or have not been executed, and whether the executed tests have passed or failed.
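Several of the eight activities lend themselves to a small data model. The following Python sketch shows one way to represent a logical test case (activity 2), a test library that relates cases to programs and tracks their status (activity 8), and a completion check in the spirit of activities 1 and 6. All names here (`LogicalCase`, `CaseLibrary`, `is_testing_complete`, the `case_id` field, the sample values) are hypothetical, and the 100% thresholds simply mirror the example criterion given under activity 1; a real project would set its own goals.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class LogicalCase:
    """The five characteristics of a logical test case, plus an assumed ID."""
    case_id: str            # hypothetical identifier, not one of the five
    initial_state: str
    database_data: dict
    inputs: dict
    expected_outputs: dict
    final_state: str


class CaseLibrary:
    """Relates test cases to the programs they test and tracks their status."""

    def __init__(self) -> None:
        self._program: Dict[str, str] = {}
        self._result: Dict[str, Optional[bool]] = {}

    def register(self, case: LogicalCase, program: str) -> None:
        self._program[case.case_id] = program
        self._result[case.case_id] = None   # None means "not yet executed"

    def record(self, case_id: str, passed: bool) -> None:
        if case_id not in self._result:
            raise KeyError(f"unknown test case: {case_id}")
        self._result[case_id] = passed

    def status(self) -> Dict[str, int]:
        values = list(self._result.values())
        return {
            "not_run": sum(v is None for v in values),
            "passed": sum(v is True for v in values),
            "failed": sum(v is False for v in values),
        }


def is_testing_complete(library: CaseLibrary, functional_cov: float,
                        statement_cov: float, branch_cov: float) -> bool:
    """Assumed criterion: every case passed and all coverage figures are 100%."""
    s = library.status()
    all_passed = s["passed"] > 0 and s["not_run"] == 0 and s["failed"] == 0
    return all_passed and min(functional_cov, statement_cov, branch_cov) >= 1.0


# Hypothetical usage with two cases against one program.
case_a = LogicalCase("TC-1", "logged out", {"users": ["ann"]},
                     {"user": "ann"}, {"page": "home"}, "logged in")
case_b = LogicalCase("TC-2", "logged out", {"users": ["ann"]},
                     {"user": "bob"}, {"page": "error"}, "logged out")
library = CaseLibrary()
library.register(case_a, "login_module")
library.register(case_b, "login_module")
library.record("TC-1", True)
before = is_testing_complete(library, 1.0, 1.0, 1.0)   # TC-2 not yet run
library.record("TC-2", True)
after = is_testing_complete(library, 1.0, 1.0, 1.0)
print(library.status(), before, after)
```

Using `None` for "not executed" keeps the three states (not run, passed, failed) distinct, which is what the test manager needs to answer both questions in activity 8.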